Skill ratings in candidate reports

Understanding the automated skill ratings in each candidate's report

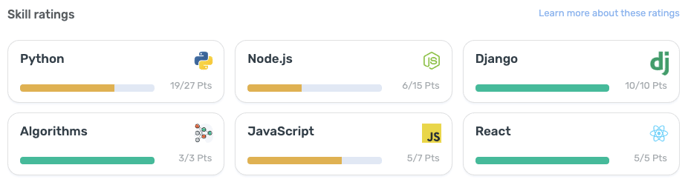

In a candidate report, you will see a section for Skill ratings.

These ratings are generated automatically from the skills and technologies covered by the challenges and questions in the assessment. They measure the candidate's skills by calculating weighted scores across challenges and multiple-choice questions.
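To make the idea of a weighted score concrete, here is a minimal sketch of how a per-skill rating could be computed as a weighted average. The exact formula the platform uses is not documented here, so the function name `skill_ratings`, the `weight` and `score` fields, and the 0 to 100 scale are all illustrative assumptions.

```python
from collections import defaultdict


def skill_ratings(items):
    """Return a hypothetical 0-100 rating per skill.

    Each item is a dict with assumed fields:
      skills: list of skill names the challenge or question covers
      weight: relative importance of the item (assumption)
      score:  fraction of the item the candidate got right, 0.0 to 1.0
    """
    weighted_sum = defaultdict(float)
    weight_total = defaultdict(float)
    for item in items:
        for skill in item["skills"]:
            weighted_sum[skill] += item["weight"] * item["score"]
            weight_total[skill] += item["weight"]
    # Weighted average per skill, scaled to 0-100 and rounded to one decimal.
    return {
        skill: round(100 * weighted_sum[skill] / weight_total[skill], 1)
        for skill in weighted_sum
        if weight_total[skill] > 0
    }


# Illustrative data only: one coding challenge and two multiple-choice questions.
example_items = [
    {"skills": ["Python"], "weight": 3, "score": 0.8},
    {"skills": ["Python", "SQL"], "weight": 1, "score": 0.5},
    {"skills": ["SQL"], "weight": 1, "score": 1.0},
]
ratings = skill_ratings(example_items)
```

In this sketch, an item that covers several skills contributes to each of them, and heavier items (such as a coding challenge) pull the rating more than a single multiple-choice question.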

Benchmark data

Once enough candidates have taken an assessment, you may see an average score next to the candidate's final score. You will also see a qualifying score, which is the threshold you set when creating the assessment to identify qualified candidates.

Scorecard

In addition to the system grading your candidates' solutions and providing a final score, a candidate report lets you rate candidates yourself across a set of skills in their scorecard and leave private notes. Read more about the scorecard here.